7 AI Agent Orchestration Platforms for LLM Applications (2024 Review)

Compare 7 leading AI agent frameworks for LLM applications across memory models, tool architectures, and deployment patterns. Includes OpenClaw, LangGraph, CrewAI, and more.

TL;DR

- Evaluate platforms on memory persistence, tool integration depth, and channel support before committing

- OpenClaw provides autonomous, long-running agents with native Telegram channels

- LangGraph and Temporal excel at reliable workflow orchestration with state recovery

- Most frameworks need Docker or Kubernetes in production; managed hosting removes that infrastructure work

Platform Comparison Matrix

Select the right framework by matching capabilities to your agent's requirements.

| Platform | Memory Model | Tool Use | Native Channels | Deployment | Best For |
|---|---|---|---|---|---|
| OpenClaw | Episodic + Semantic | Plugin Marketplace | Telegram | Docker Container | Autonomous 24/7 agents |
| LangGraph | Checkpoint State | Function Calling | None | Python Process | Complex state machines |
| CrewAI | Context Window | Python Decorators | None | Python Script | Role-based agent teams |
| LlamaIndex Workflows | Event Store | Callback Handlers | API Gateway | Python Service | RAG-heavy knowledge agents |
| AutoGen | Group Chat Buffer | Code Execution | None | Python Process | Code generation tasks |
| Semantic Kernel | Volatile + Persistent | Native Plugins | Teams, Outlook | Multi-language SDK | Enterprise Microsoft stack |
| Temporal | Event Sourcing | Activity Functions | None | Self-hosted Cluster | Mission-critical reliability |

OpenClaw (Clawdbot)

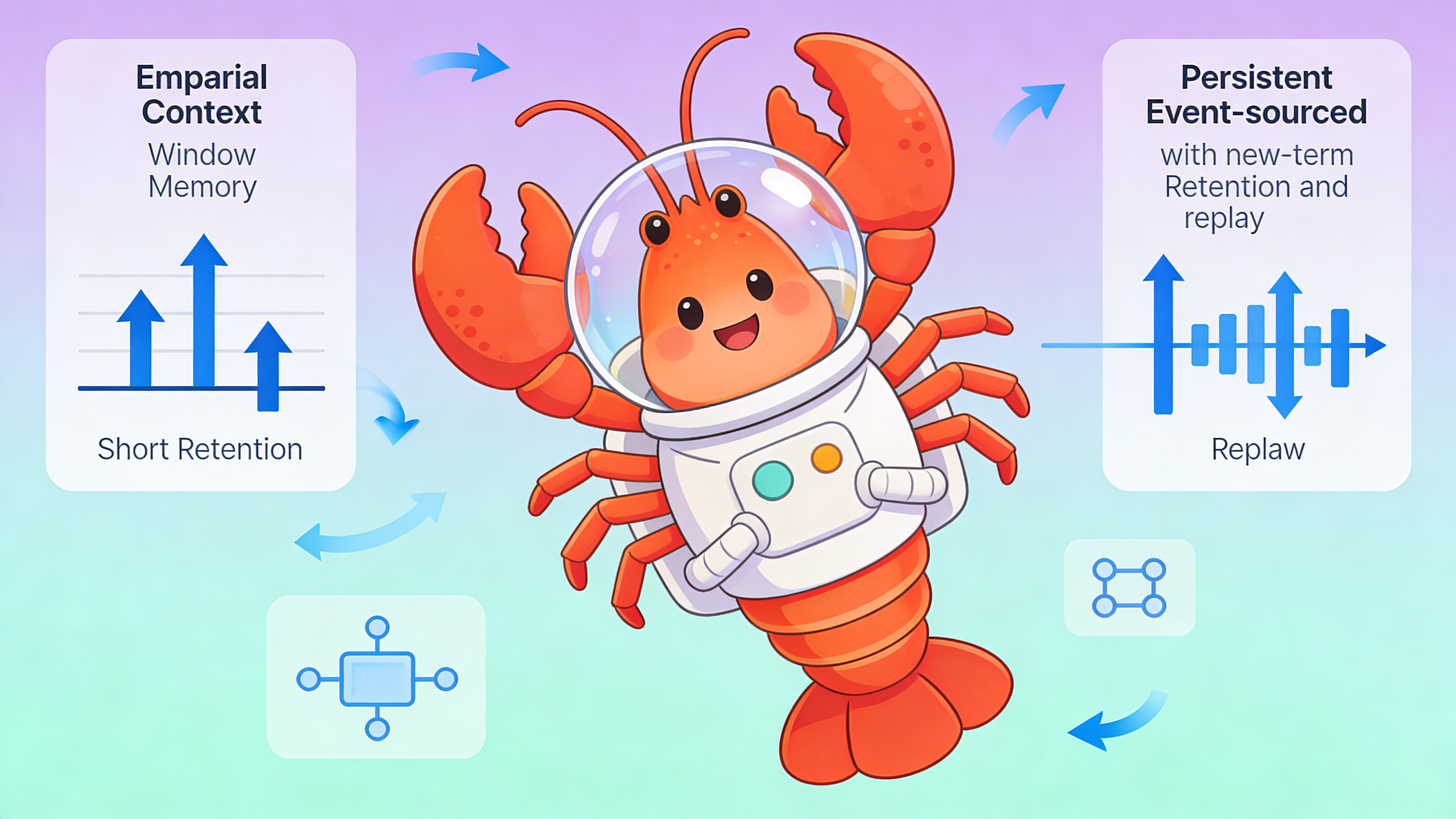

OpenClaw is purpose-built for autonomous agents that operate continuously across days or weeks. Its memory system retains episodic logs and semantic vector embeddings across conversations.
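To make the episodic-plus-semantic idea concrete, here is a minimal sketch using only the Python standard library. It is not OpenClaw's API: `HybridMemory`, `embed`, and `cosine` are hypothetical names, and the bag-of-words vector is a toy stand-in for a real embedding model.

```python
import math
import sqlite3
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class HybridMemory:
    """Hypothetical store: an append-only episodic log plus similarity recall."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS episodes (id INTEGER PRIMARY KEY, role TEXT, content TEXT)"
        )

    def remember(self, role: str, content: str) -> None:
        self.db.execute("INSERT INTO episodes (role, content) VALUES (?, ?)", (role, content))
        self.db.commit()

    def recent(self, n: int = 5) -> list:
        """Episodic view: the last n messages in chronological order."""
        rows = self.db.execute(
            "SELECT content FROM episodes ORDER BY id DESC LIMIT ?", (n,)
        ).fetchall()
        return [r[0] for r in reversed(rows)]

    def recall(self, query: str, k: int = 1) -> list:
        """Semantic view: the k stored messages most similar to the query."""
        q = embed(query)
        rows = self.db.execute("SELECT content FROM episodes").fetchall()
        ranked = sorted(rows, key=lambda r: cosine(q, embed(r[0])), reverse=True)
        return [r[0] for r in ranked[:k]]


memory = HybridMemory()
memory.remember("user", "my order number is 4417")
memory.remember("user", "i prefer replies in spanish")
memory.remember("assistant", "noted, switching to spanish")
```

The episodic log answers "what just happened?", while the similarity lookup answers "what do we know about this topic?". A production system would replace `embed` with a real embedding model and a vector index.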

Key capabilities include a plugin marketplace for tool reuse, built-in rate limiting, and native Telegram integration.

- Telegram bot token authentication via OPENCLAW_GATEWAY_TOKEN

- Persistent memory backed by SQLite or PostgreSQL

- Embeddable UI for human-in-the-loop oversight

- Supports 2,000+ messages/month on starter tiers
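Built-in rate limiting of the kind listed above can be pictured as a sliding-window counter. The sketch below is an assumption about how such a limit might behave, not OpenClaw's implementation; `SlidingWindowLimiter` is a hypothetical name, and the injectable clock exists only to make the behaviour easy to test.

```python
import time
from collections import deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most `limit` events within any `window_seconds` span."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.events = deque()  # timestamps of accepted events, oldest first

    def allow(self) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.events and now - self.events[0] >= self.window:
            self.events.popleft()
        if len(self.events) < self.limit:
            self.events.append(now)
            return True
        return False


# Example: a cap of 10 messages per minute.
limiter = SlidingWindowLimiter(limit=10, window_seconds=60)
accepted = [limiter.allow() for _ in range(12)]
```

With the default clock, the first ten calls in a burst are accepted and the remaining two are rejected until older timestamps leave the window.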

OpenClaw Configuration Example

    # config.yaml - OpenClaw agent definition
    agent:
      name: "support-coach"
      model: "gpt-4-turbo-preview"
      memory:
        type: "hybrid"
        episodic_db: "sqlite:///claw_memory.db"
        semantic_retention_days: 30
      channels:
        telegram:
          enabled: true
          webhook_path: "/webhook/telegram"
      plugins:
        - id: "web_search"
          version: "1.2.0"
          config:
            max_results: 5
      rate_limits:
        messages_per_minute: 10
        tokens_per_hour: 50000

    # Deploy via easyclawd.com for managed hosting without infrastructure setup

LangGraph

LangGraph provides fine-grained control over agent state through directed graphs. Each node represents a tool or LLM call; edges define transition logic and conditional branching.

Checkpointing enables pause/resume workflows and human review points.

- Stateful graphs with semantic versioning

- Built-in persistence for long-running tasks

- Streaming support for real-time UI updates

- Integration with LangSmith for observability
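The pause/resume behaviour that checkpointing enables can be sketched in plain Python. This is a conceptual illustration, not the LangGraph API: `TinyGraph`, its methods, and the node names are all hypothetical. LangGraph's own `StateGraph`, checkpointers, and thread IDs are considerably richer.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]
END = "__end__"


class TinyGraph:
    """Hypothetical mini state graph with per-thread checkpoints."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.routers: Dict[str, Callable[[State], str]] = {}
        self.checkpoints: Dict[str, tuple] = {}  # thread_id -> (next_node, state)

    def add_node(self, name: str, fn: Callable[[State], State],
                 router: Callable[[State], str]) -> None:
        self.nodes[name] = fn        # the work done at this node
        self.routers[name] = router  # the edge: picks the next node from the state

    def run(self, thread_id: str, start: str, state: Optional[State] = None,
            pause_before: Optional[str] = None) -> State:
        # Resume from the saved checkpoint if this thread already has one.
        node, current = self.checkpoints.get(thread_id, (start, dict(state or {})))
        while node != END and node != pause_before:
            current = self.nodes[node](current)
            node = self.routers[node](current)
            self.checkpoints[thread_id] = (node, dict(current))  # checkpoint every step
        return current


graph = TinyGraph()
graph.add_node("draft", lambda s: {**s, "draft": f"Reply about {s['topic']}"}, lambda s: "review")
graph.add_node("review", lambda s: {**s, "approved": True},
               lambda s: "send" if s["approved"] else "draft")
graph.add_node("send", lambda s: {**s, "sent": True}, lambda s: END)

# First call stops before the human review point; the second resumes from the checkpoint.
paused = graph.run("thread-1", start="draft", state={"topic": "refunds"}, pause_before="review")
resumed = graph.run("thread-1", start="draft")
```

Because the checkpoint stores both the next node and the state, the second call needs no input: it picks up at `review` and continues to `send`.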

LangGraph Setup

Install and create a state graph:

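    # Install LangGraph and its CLI (the CLI ships as a separate package)
    pip install -U langgraph "langgraph-cli[inmem]"

    # Scaffold a project from a template
    # (template IDs vary by CLI version; omit --template to choose interactively)
    langgraph new my-agent --template react-agent-python
    cd my-agent

    # Set environment variables for LangSmith tracing
    export LANGCHAIN_TRACING_V2=true
    export LANGCHAIN_API_KEY="your-key"

    # Run the local development server
    # (for database-backed checkpoints, see the persistence guide under See Also)
    langgraph dev --port 8000

CrewAI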

CrewAI structures agents around roles and delegation. In its hierarchical process, a manager agent assigns tasks to worker agents based on their capabilities; in the sequential process, tasks run in the order they are defined.

Role-based prompts and tool bindings enforce separation of concerns.

| Component | Definition Method | Execution Model |
|---|---|---|
| Agent Role | Python class with role/goal/backstory | Initialized at crew creation |
| Task | Decorated function with expected_output | Queued by manager |
| Tool | @tool decorator or import | Bound to specific agent |
| Process | Sequential or hierarchical | Determines delegation flow |
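The delegation flow in the table can be illustrated with a few dataclasses. This is a rough sketch of manager-style routing, not CrewAI's API: `Worker`, `Task`, and `delegate` are hypothetical names, and a real manager agent would use an LLM rather than a skill lookup.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Task:
    description: str
    required_skill: str


@dataclass
class Worker:
    role: str
    skills: Set[str]
    log: List[str] = field(default_factory=list)

    def perform(self, task: Task) -> str:
        result = f"{self.role} completed: {task.description}"
        self.log.append(result)
        return result


def delegate(tasks: List[Task], workers: List[Worker]) -> List[str]:
    """Manager step: route each task to the first worker with the needed skill."""
    results = []
    for task in tasks:
        worker = next((w for w in workers if task.required_skill in w.skills), None)
        if worker is None:
            raise LookupError(f"no worker can handle {task.required_skill!r}")
        results.append(worker.perform(task))
    return results


crew = [Worker("Researcher", {"search"}), Worker("Writer", {"writing"})]
plan = [Task("find three sources", "search"), Task("draft the summary", "writing")]
outputs = delegate(plan, crew)
```

Binding skills to specific workers is what enforces the separation of concerns: the writer never receives a search task, and an unroutable task fails loudly instead of being guessed at.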

LlamaIndex Workflows

LlamaIndex Workflows uses an event-driven architecture where components react to events emitted by other components.

This design suits agents that ingest documents, generate embeddings, and trigger downstream actions.

- Event bus decouples producers from consumers

- Built-in retry and error handling policies

- Native vector store integration

- Observable via LlamaIndex callback system
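The event-driven pattern described above can be sketched with a small in-process bus. This is an illustration of the idea only, not the LlamaIndex Workflows API: `Event`, `EventBus`, and the event names are hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Event:
    name: str
    payload: dict


class EventBus:
    """Hypothetical bus: handlers subscribe by event name and may emit a follow-up event."""

    def __init__(self) -> None:
        self.handlers = defaultdict(list)

    def on(self, name: str, handler: Callable[[Event], Optional[Event]]) -> None:
        self.handlers[name].append(handler)

    def run(self, first: Event) -> List[str]:
        """Dispatch events until the queue drains; return names in processing order."""
        queue, seen = deque([first]), []
        while queue:
            event = queue.popleft()
            seen.append(event.name)
            for handler in self.handlers[event.name]:
                follow_up = handler(event)
                if follow_up is not None:
                    queue.append(follow_up)
        return seen


bus = EventBus()
# Producer and consumers only share event names, never direct references to each other.
bus.on("document.ingested",
       lambda e: Event("chunks.embedded", {"chunks": len(e.payload["text"].split("."))}))
bus.on("chunks.embedded", lambda e: Event("index.updated", e.payload))
trace = bus.run(Event("document.ingested", {"text": "First fact. Second fact"}))
```

Adding a new downstream action means registering one more handler; the ingestion step does not change.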

AutoGen

AutoGen, from Microsoft Research, focuses on conversable agents that can execute code in Docker containers.

Group chat patterns enable multi-agent debate and consensus-building.

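    # Minimal AutoGen group chat setup (AutoGen 0.2-style API)
    from autogen import AssistantAgent, GroupChat, GroupChatManager, UserProxyAgent

    config_list = [{"model": "gpt-4", "api_key": "..."}]
    llm_config = {"config_list": config_list}

    assistant = AssistantAgent("coder", llm_config=llm_config)
    user_proxy = UserProxyAgent("executor", code_execution_config={"use_docker": True})

    groupchat = GroupChat(agents=[assistant, user_proxy], messages=[], max_round=5)
    # The manager selects the next speaker and relays messages between agents
    manager = GroupChatManager(groupchat=groupchat, llm_config=llm_config)
    user_proxy.initiate_chat(manager, message="Write and run a script that prints the first 10 primes.")

    # Caution: Code execution requires tight sandbox controls

Semantic Kernel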

Semantic Kernel provides planners that automatically generate execution strategies from the available plugins and the user's request.

Memory connectors support multiple vector databases via a unified interface.

| Planner Type | Use Case | Complexity |
|---|---|---|
| Basic Planner | Single-step tool calls | Low |
| Stepwise Planner | Multi-step reasoning | Medium |
| Action Planner | Dynamic function selection | Medium |
| Sequential Planner | Predefined workflows | High |
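Dynamic function selection, the simplest planning style in the table, can be sketched as picking the plugin function that best matches the request. The example below is a toy under stated assumptions, not Semantic Kernel's API: `PluginFunction` and `plan_single_action` are hypothetical names, and word overlap stands in for the LLM that a real planner would consult.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PluginFunction:
    name: str
    description: str
    run: Callable[[str], str]


def plan_single_action(request: str, functions: List[PluginFunction]) -> PluginFunction:
    """Pick the function whose description shares the most words with the request."""
    words = set(request.lower().split())
    return max(functions, key=lambda f: len(words & set(f.description.lower().split())))


functions = [
    PluginFunction("send_email", "send an email message to a contact", lambda r: "email sent"),
    PluginFunction("book_meeting", "book a calendar meeting with attendees", lambda r: "meeting booked"),
]
chosen = plan_single_action("please book a meeting with the design team", functions)
result = chosen.run("please book a meeting with the design team")
```

The important design point carries over to real planners: function descriptions are the planner's only view of a plugin, so vague descriptions lead to poor selections.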

Temporal

Temporal brings durable execution to agent workflows. Workflows survive process crashes, deployments, and infrastructure failures.

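Use Temporal when an agent task must run to completion despite failures. Temporal persists each workflow's event history and replays it after a crash, but activities are retried at-least-once by default, so tool calls with side effects should be idempotent to get effectively exactly-once results.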
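The replay idea behind durable execution can be shown in a few lines. This is a conceptual sketch, not the Temporal SDK: `DurableRun`, `workflow`, and the two activities are hypothetical, and a plain list stands in for the durable event store.

```python
from typing import Callable, List


class DurableRun:
    """Hypothetical replay-based executor backed by a persisted history."""

    def __init__(self, history: List[object]) -> None:
        self.history = history  # recorded results of completed steps
        self.position = 0       # index of the next step in this execution

    def step(self, activity: Callable[[], object]) -> object:
        if self.position < len(self.history):
            result = self.history[self.position]  # replay: reuse the recorded result
        else:
            result = activity()                   # first time: run, then record
            self.history.append(result)
        self.position += 1
        return result


calls = []


def charge_card() -> str:
    calls.append("charge")
    return "charged"


def send_receipt(crash: bool) -> str:
    if crash:
        raise RuntimeError("worker died")
    calls.append("receipt")
    return "sent"


def workflow(run: DurableRun, crash: bool = False) -> list:
    return [run.step(charge_card), run.step(lambda: send_receipt(crash))]


history = []  # stands in for a durable event store
try:
    workflow(DurableRun(history), crash=True)  # fails after the charge is recorded
except RuntimeError:
    pass
final = workflow(DurableRun(history))          # replay skips the completed charge
```

The card is charged once even though the workflow code ran twice. Note the gap this sketch leaves open: if a worker dies after an activity's side effect but before its result is recorded, the activity runs again, which is why idempotent activities matter.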

⚠️ Security Warning: Exposing agent endpoints without token authentication risks prompt injection and unauthorized tool execution. Always set OPENCLAW_GATEWAY_TOKEN or equivalent auth headers. Never log LLM prompts containing API keys—use sanitized observability pipelines.
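Both halves of that warning can be sketched with the standard library. The header format (`Authorization: Bearer <token>`) and the redaction pattern are assumptions made for illustration; `is_authorized` and `sanitize` are hypothetical helpers, not part of any framework listed here.

```python
import hmac
import os
import re

# Assumption: the gateway token is supplied through this environment variable.
GATEWAY_TOKEN = os.environ.get("OPENCLAW_GATEWAY_TOKEN", "")

# Assumed pattern covering "sk-..." style keys and Bearer tokens; extend for your providers.
SECRET_PATTERN = re.compile(r"(sk-[A-Za-z0-9_-]{8,}|Bearer\s+[A-Za-z0-9._-]+)")


def is_authorized(headers: dict, expected_token: str) -> bool:
    """Constant-time check of an 'Authorization: Bearer <token>' header."""
    supplied = headers.get("Authorization", "")
    expected = f"Bearer {expected_token}"
    # An empty expected token means auth is unconfigured, so reject everything.
    return bool(expected_token) and hmac.compare_digest(supplied.encode(), expected.encode())


def sanitize(text: str) -> str:
    """Redact anything that looks like a credential before it reaches a log line."""
    return SECRET_PATTERN.sub("[REDACTED]", text)
```

A request handler would call `is_authorized(request_headers, GATEWAY_TOKEN)` before dispatching to any tool, and pass prompts through `sanitize` before logging them. Failing closed when the token is unset avoids accidentally running an open endpoint.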

Deployment Patterns

Production deployment requires orchestration, observability, and scaling strategies.

| Pattern | Framework Support | Infrastructure Needed |
|---|---|---|
| Single Container | OpenClaw, CrewAI | Docker, CI/CD |
| Serverless Function | LangGraph (via LangServe) | AWS Lambda/Vercel |
| Stateful Cluster | Temporal, Semantic Kernel | Kubernetes, Redis |
| Managed SaaS | OpenClaw via EasyClawd | None (fully hosted) |

See Also

- OpenClaw Documentation — https://github.com/openclaw/openclaw/wiki

- LangGraph State Persistence Guide — https://langchain-ai.github.io/langgraph/persistence/

- AI Agent Security Best Practices — https://owasp.org/www-project-top-10-for-large-language-model-applications/
